Siri-ously Speaking: How Voice Activation and Text-to-Speech Really Work

November 11, 2011

When Apple recently unveiled the iPhone 4S, many were surprised by the lack of any external design or hardware configuration changes. Yet for all its surface-level sameness, it's since become apparent that the phone's true beauty is much more than skin deep.

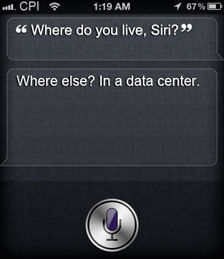

We're talking, of course, about "Siri," a mild-mannered, informative and surprisingly witty personal assistant baked straight into the operating system of each new iPhone.

Press a button, ask a question (out loud) and get an answer (yup, out loud), almost instantly. Same goes for reminders, text messages and more - simply ask (or tell, nicely!) Siri to do something, sit back and listen to the sage advice of one wise lady (did we mention the computer-generated voice is female?).

But just what makes Siri tick? Software, and lots of it. Truth be told, voice activation and voice recognition software has been in the works (and in use) for some time now. Siri's just the latest in a line of technologies raising the bar on how intuitive voice interaction between us (humans) and them (computers) can be.

Here's a quick, nuts-and-bolts breakdown of the technology at play:

- We verbalize a command or question to our handheld device. That information is captured as audio and routed over the internet to a far-off data center.
- Once there, the audio information is funneled (or in our case, routed through superior cable management) to stacks and stacks of servers (resting comfortably in racks, cabinets or wall-mount systems).
- Inside these servers, complex computations begin to take effect. First, automatic speech recognition (ASR) software transcribes our speech into text. This text is then processed, tagged and parsed.
- The information within that text is then analyzed for content and a (hopefully) acceptable answer or resolution is formulated.
- That answer is then transformed back into natural language text, and using text-to-speech software, synthesized back into device-generated speech.
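
For the curious, the steps above can be pictured as a chain of small functions, each handing its result to the next. What follows is only a toy sketch in Python: every name in it (`Audio`, `recognize_speech`, `parse_text`, `formulate_answer`, `synthesize_speech`, `handle_request`) is made up for illustration, the "audio" is just a string standing in for real sound, and nothing here reflects how Siri or any real speech service is actually built.

```python
from dataclasses import dataclass


# All names are hypothetical. Each stage is a stub standing in for
# far more complex software running in the data center.
@dataclass
class Audio:
    """Stand-in for recorded sound; a real system would hold waveform data."""
    spoken_words: str


def recognize_speech(audio: Audio) -> str:
    """ASR stage: turn audio into text (the stub just reads the field)."""
    return audio.spoken_words.lower().strip()


def parse_text(text: str) -> dict:
    """Process, tag and parse: split into tokens and guess a coarse intent."""
    tokens = text.rstrip("?.!").split()
    if tokens and tokens[0] == "remind":
        intent = "reminder"
    elif tokens and tokens[0] in {"what", "who", "where", "when", "how"}:
        intent = "question"
    else:
        intent = "unknown"
    return {"tokens": tokens, "intent": intent}


def formulate_answer(parsed: dict) -> str:
    """Analyze the parsed request and build a natural-language reply."""
    tokens = parsed["tokens"]
    if parsed["intent"] == "reminder":
        # Drop the leading "remind me to" when present, else just "remind".
        task = tokens[3:] if tokens[1:3] == ["me", "to"] else tokens[1:]
        return f"OK, I will remind you to {' '.join(task)}."
    if parsed["intent"] == "question":
        return "Let me look that up."
    return "Sorry, I did not understand that."


def synthesize_speech(text: str) -> Audio:
    """Text-to-speech stage: turn the reply text back into (pretend) audio."""
    return Audio(spoken_words=text)


def handle_request(audio: Audio) -> Audio:
    """Data-center side of the round trip: audio in, spoken answer out."""
    text = recognize_speech(audio)
    parsed = parse_text(text)
    answer = formulate_answer(parsed)
    return synthesize_speech(answer)


if __name__ == "__main__":
    reply = handle_request(Audio("Remind me to call Mom"))
    print(reply.spoken_words)
```

In a real deployment the phone would record the audio and send it over the network, and `handle_request` would run on those stacks of servers; here everything runs in one place so the hand-offs between stages are easy to follow.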

All in a matter of seconds, the entire process runs its course (sound waves > phone > data center > back to phone = you, amazed!). The glue that holds it all together? The data center. The glue that holds the data center together? Who else? Chatsworth Products, Inc.

Jeff Cihocki, eContent Specialist